We are deploying OpenSearch in a Kubernetes environment with three pods — one each for the client, manager, and data roles.

Currently, we are not ingesting any data into OpenSearch, and there are no indices present in the system.

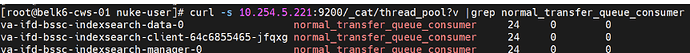

However, we’ve observed that a thread pool named normal_transfer_queue_consumer is active, with 24 threads running on each pod.

The normal_transfer_queue_consumer pool is always active and its thread count is 24. Will this have any performance impact? Is there a reason why the thread count is 24?
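For anyone who wants to compare numbers across pods, here is a minimal sketch of how the output of `GET _cat/thread_pool?v&h=node_name,name,active,size` could be filtered for this pool. The helper names (`parse_cat_thread_pool`, `pools_named`) are hypothetical, and the sample text is illustrative only, not real output from our cluster. It also assumes the pool is listed by this API and that node names contain no spaces.

```python
def parse_cat_thread_pool(text):
    """Parse whitespace-delimited `_cat/thread_pool?v` text into a list of dicts.

    Assumes the first non-empty line is the header row (the `v` flag)
    and that no column value contains spaces.
    """
    lines = [ln for ln in text.splitlines() if ln.strip()]
    if not lines:
        return []
    header = lines[0].split()
    return [dict(zip(header, ln.split())) for ln in lines[1:]]


def pools_named(rows, name):
    """Keep only the rows whose `name` column matches the given pool name."""
    return [row for row in rows if row.get("name") == name]


# Illustrative sample only: made-up node names and values, not captured output.
SAMPLE = """\
node_name name active size
data-0 normal_transfer_queue_consumer 24 24
data-0 search 0 0
"""

rows = pools_named(parse_cat_thread_pool(SAMPLE), "normal_transfer_queue_consumer")
for row in rows:
    print(row["node_name"], row["name"], "active=" + row["active"], "size=" + row["size"])
```

In practice the text would come from the cluster's REST endpoint rather than a hard-coded string.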

Below is the resource configuration used for the OpenSearch pods:

manager:#cpu: “1”

data:#cpu: “1”

client:#cpu: “1”

Is there any parameter to change the number of normal_transfer_queue_consumer threads at startup?

If we reduce the number of normal_transfer_queue_consumer threads, or disable the pool by setting it to 0, will that have any impact on the system?

Regards,

pablo

August 5, 2025, 11:05am

2

@Varun_Srinivasa Take a look at this pull request. This option was introduced to support the upload timeouts solution.

main ← vikasvb90:permit_backed_transfers

opened 04:05AM - 05 Feb 24 UTC

### Description

During bursts of uploads, which typically happen in finalize recovery in cases like shrink, split, and force merge where a lot of segments or large segments become available for upload, a large number of requests get queued up behind the connection pool. This results either in timeouts due to failure to acquire a connection, or in idle connection timeouts where an ongoing request takes too long to read and compute data for upload because of the high wait time for acquiring a thread in the stream reader pool. It is more prevalent in the async flow, since the main thread doesn't wait for the response and everything ends up getting submitted for upload. Neither the sync nor the async S3 SDK APIs have a way today to handle such bursts.

This PR resolves these problems by applying natural backpressure on the main thread with the help of backing permits. It also adds retries on the future in case of an SDK exception or a failure to acquire a permit. This means that in a multi-part upload, a failing part can be retried independently.
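To make the idea concrete, here is a rough sketch of permit-backed backpressure with per-part retries. It is not the PR's code (the PR is Java inside OpenSearch); the class `PermitBackedUploader`, its parameters, and `flaky_upload` are all hypothetical names chosen for illustration. It also simplifies the behaviour: it retries only when the upload itself raises, and blocks rather than fails when no permit is available.

```python
import threading
from concurrent.futures import ThreadPoolExecutor


class PermitBackedUploader:
    """Hypothetical illustration: a semaphore bounds in-flight uploads."""

    def __init__(self, permits, workers, max_retries=3):
        self._permits = threading.Semaphore(permits)
        self._pool = ThreadPoolExecutor(max_workers=workers)
        self._max_retries = max_retries

    def submit(self, upload_part, part):
        # Blocks the caller until a permit is free, so a burst of parts
        # cannot pile up without bound behind the worker pool.
        self._permits.acquire()
        try:
            future = self._pool.submit(self._run_with_retries, upload_part, part)
        except BaseException:
            self._permits.release()
            raise
        # Return the permit once the part finishes, whether it succeeded or failed.
        future.add_done_callback(lambda _f: self._permits.release())
        return future

    def _run_with_retries(self, upload_part, part):
        # Each part is retried on its own; other parts are unaffected.
        last_error = None
        for _ in range(self._max_retries + 1):
            try:
                return upload_part(part)
            except Exception as exc:
                last_error = exc
        raise last_error

    def close(self):
        self._pool.shutdown(wait=True)


# Simulated upload that fails on the first attempt for every part.
attempts = {}


def flaky_upload(part):
    attempts[part] = attempts.get(part, 0) + 1
    if attempts[part] == 1:
        raise IOError("simulated SDK failure")
    return f"uploaded-{part}"


uploader = PermitBackedUploader(permits=2, workers=2)
futures = [uploader.submit(flaky_upload, p) for p in range(5)]
results = [f.result() for f in futures]
uploader.close()
print(results)
```

With two permits, the submitting thread is blocked whenever two parts are already in flight, which is the backpressure effect the description refers to.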

Testing

1. During post recovery of a 98gb shard on a r7g.medium box after split of nyc_taxis index, I did not observe any IO timeout. Concurrent execution of so workload benchmark also did not produce any timeout error.

2. No impact on indexing performance on executing benchmarks on main build and build with this PR.

### Related Issues

Resolves #[Issue number to be closed when this PR is merged]

### Check List

- [x] New functionality includes testing.

- [x] All tests pass

- [ ] ~~New functionality has been documented.~~

- [ ] ~~New functionality has javadoc added~~

- [x] Failing checks are inspected and point to the corresponding known issue(s) (See: [Troubleshooting Failing Builds](../blob/main/CONTRIBUTING.md#troubleshooting-failing-builds))

- [x] Commits are signed per the DCO using --signoff

- [ ] ~~Commit changes are listed out in CHANGELOG.md file (See: [Changelog](../blob/main/CONTRIBUTING.md#changelog))~~

- [ ] ~~Public documentation issue/PR [created](https://github.com/opensearch-project/documentation-website/issues/new/choose)~~

By submitting this pull request, I confirm that my contribution is made under the terms of the Apache 2.0 license.

For more information on following Developer Certificate of Origin and signing off your commits, please check [here](https://github.com/opensearch-project/OpenSearch/blob/main/CONTRIBUTING.md#developer-certificate-of-origin).
